Deploying Backstage in Kubernetes With Enterprise-Grade Governance and Automation

Introduction To Backstage

Recently, I published a recipe for Backstage, an open source project from Spotify that has seen tremendous adoption over the last year among platform engineering teams at enterprises of all types.

Some of the key features of Backstage include:

- an easy-to-use interface for developers

- an extensible plugin ecosystem (for example, plugins are available for GitHub Actions, ArgoCD, AWS, and more)

- ability to easily build and publish tech documentation

- native Kubernetes plugin for cloud-native apps

- ability to compose different developer workflows into an Internal Developer Portal (IDP)

Operationalizing Backstage in the Enterprise

While setting up Backstage for one or two developers is simple, operationalizing it for enterprise scale presents its own set of challenges. Some of these include:

- enforcing consistent and validated deployments of Backstage: anyone can theoretically install Backstage using Helm, for example, but as a platform admin I want to enforce an installation and deployment pattern that I have validated and confirmed to be compliant with my IT organization

- enabling seamless deployment on any cluster type: with enterprises running a hybrid or multi-cloud strategy, how do I make sure that Backstage can easily be deployed on any cluster type?

- staying up to date with the latest versions: as a platform admin, I want to stay current with the latest versions of Backstage and apply updates across my cluster fleet

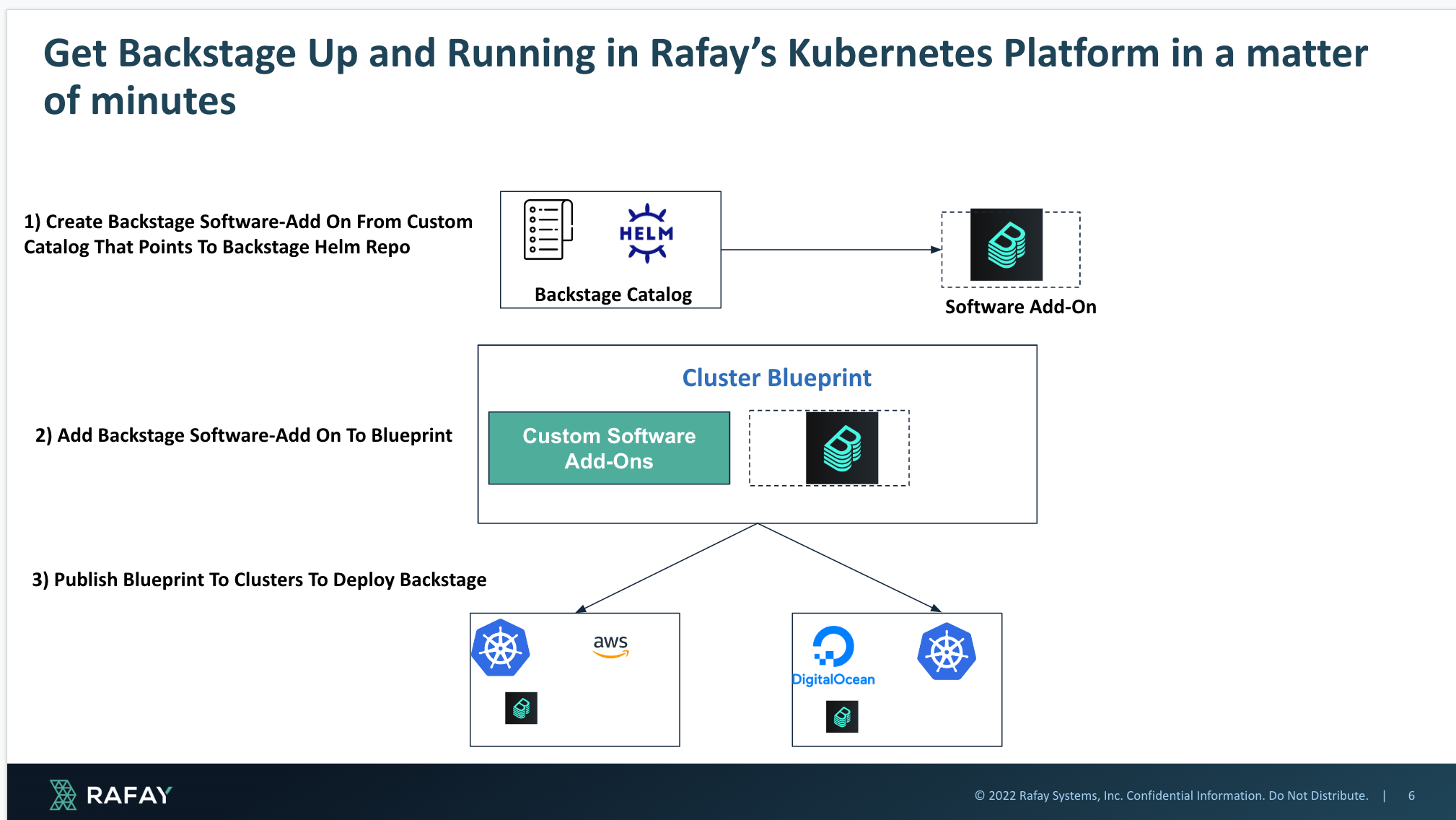

These challenges are complex and can take many platform teams months to figure out. However, with Rafay's native add-on and blueprint constructs, platform teams can enforce automation and governance while enabling developer self-service with Backstage in a matter of minutes using the 3-step process seen below:

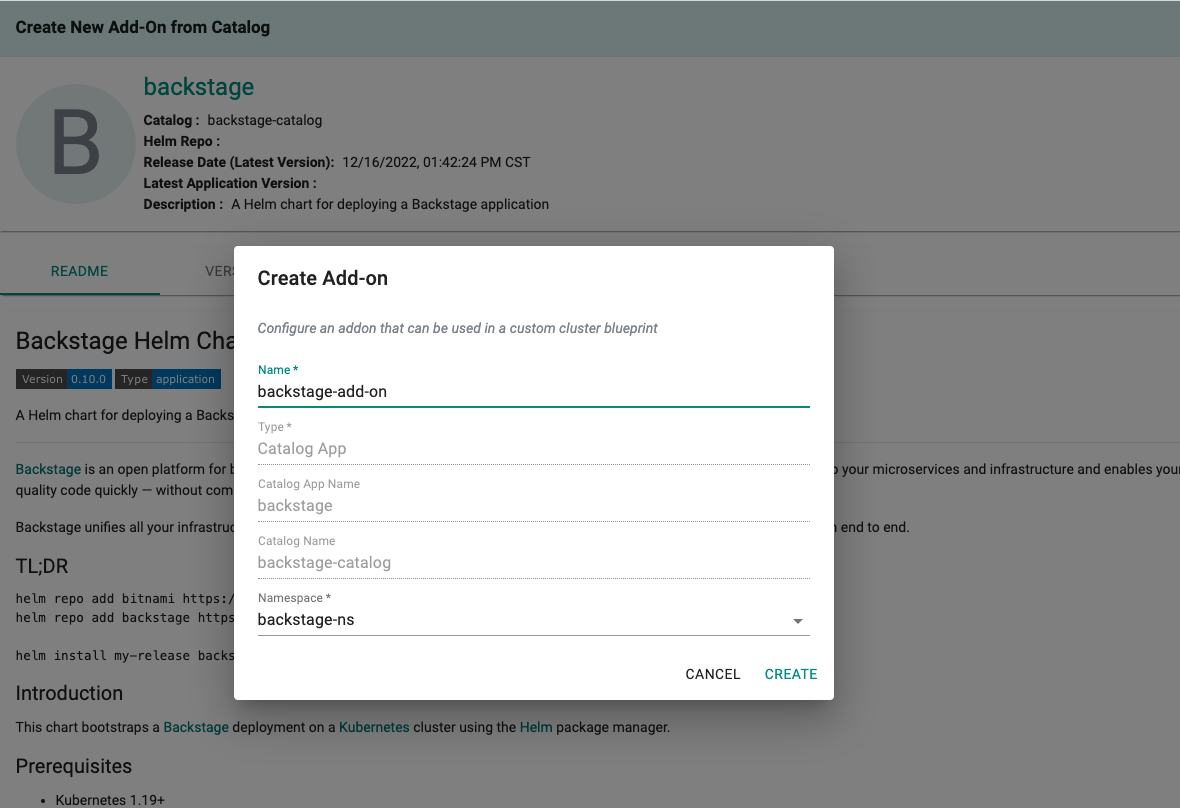

1) Create a custom software catalog pointing to Backstage's Helm repo. Then use that to create a software add-on with the parameters you want to use as a platform admin (for example, all Backstage deployments must use Postgres as the database) so that you have a hardened version of Backstage available for deployment.

2) Then add that Backstage software add-on to a cluster blueprint so that it becomes part of your default cluster setup and provisioning. Use blueprint drift detection to make sure the Backstage installation isn't tampered with.

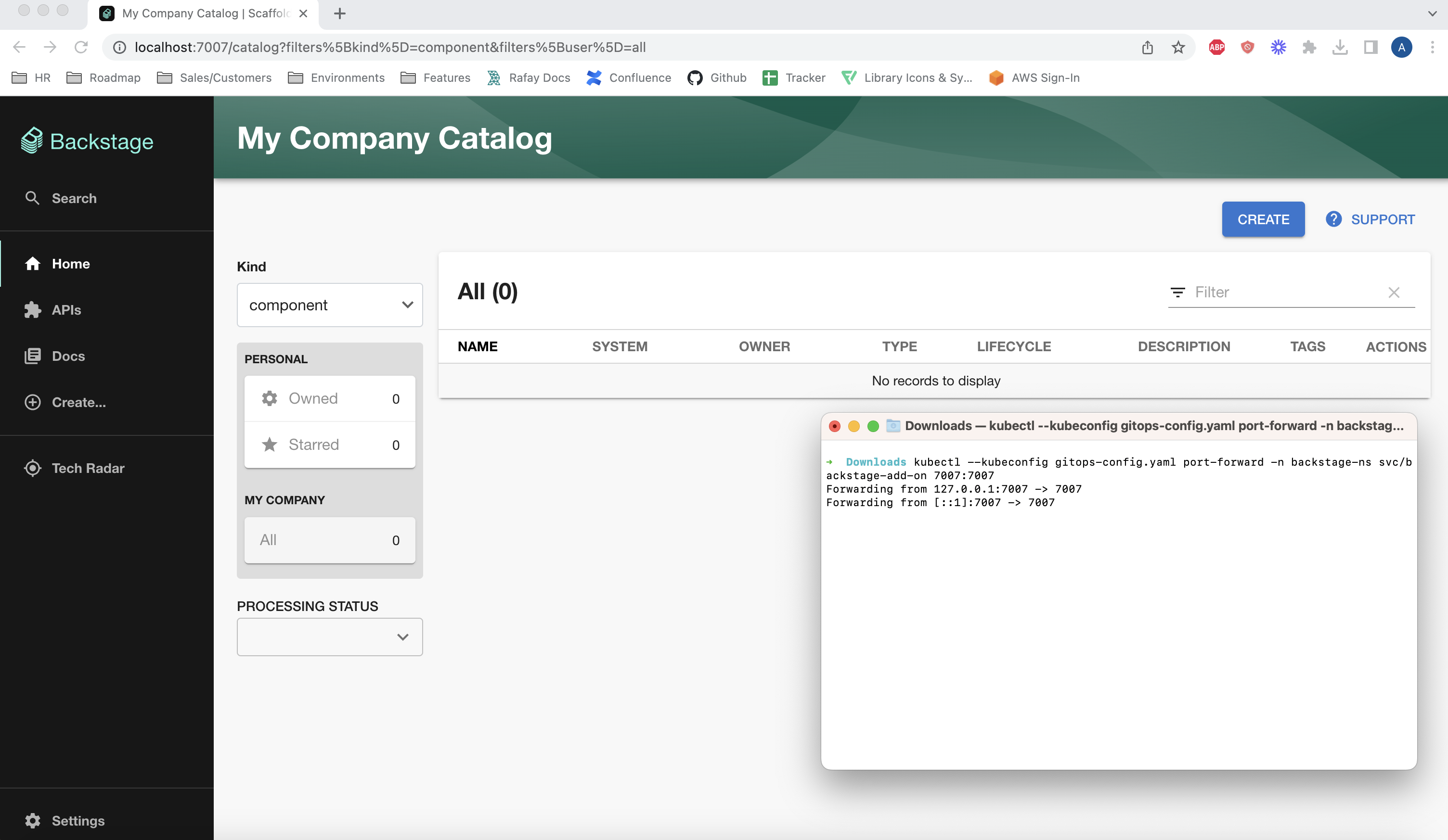

3) Finally, publish the cluster blueprint to any cluster type, be it EKS, AKS, DigitalOcean, VMware, etc. The installation then takes place without you having to read through installation guides. When new versions of Backstage come out, simply update your software add-on to use the new version, update your blueprint, and then publish it to your cluster fleet for seamless upgrades.
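To make the idea behind drift detection in step 2 more concrete, here is a minimal conceptual sketch in Python. It is not Rafay's implementation or API. It only illustrates the general idea of comparing the hardened settings a platform admin pinned against what is actually running on a cluster. The key names (`chartVersion`, `database.client`, `replicas`), the version string, and the `find_drift` helper are all hypothetical and chosen for illustration.

```python
from typing import Any

# Hypothetical hardened settings a platform admin might pin in the add-on.
# Key names are illustrative; they are not taken from the Backstage Helm chart.
HARDENED_VALUES: dict[str, Any] = {
    "chartVersion": "1.0.0",
    "database": {"client": "pg"},  # policy: all deployments must use Postgres
    "replicas": 2,
}


def find_drift(desired: dict[str, Any], actual: dict[str, Any], prefix: str = "") -> list[str]:
    """Return dotted paths of settings where `actual` differs from `desired`."""
    drifted: list[str] = []
    for key, want in desired.items():
        path = f"{prefix}{key}"
        have = actual.get(key)
        if isinstance(want, dict) and isinstance(have, dict):
            # Recurse into nested settings and keep the dotted path.
            drifted.extend(find_drift(want, have, prefix=f"{path}."))
        elif have != want:
            drifted.append(path)
    return drifted


# A hypothetical cluster where someone switched the database by hand.
live_values = {
    "chartVersion": "1.0.0",
    "database": {"client": "better-sqlite3"},
    "replicas": 2,
}

print(find_drift(HARDENED_VALUES, live_values))  # ['database.client']
```

In this sketch, a non-empty result would be the signal that a cluster no longer matches the validated configuration and should be reconciled back to the blueprint.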

How To Get Started With This Methodology in Rafay

Using the recipe published here, you can get Backstage up and running in your Kubernetes environments in a matter of minutes. Watch the YouTube video to see this up and running in 10 minutes: