Overview

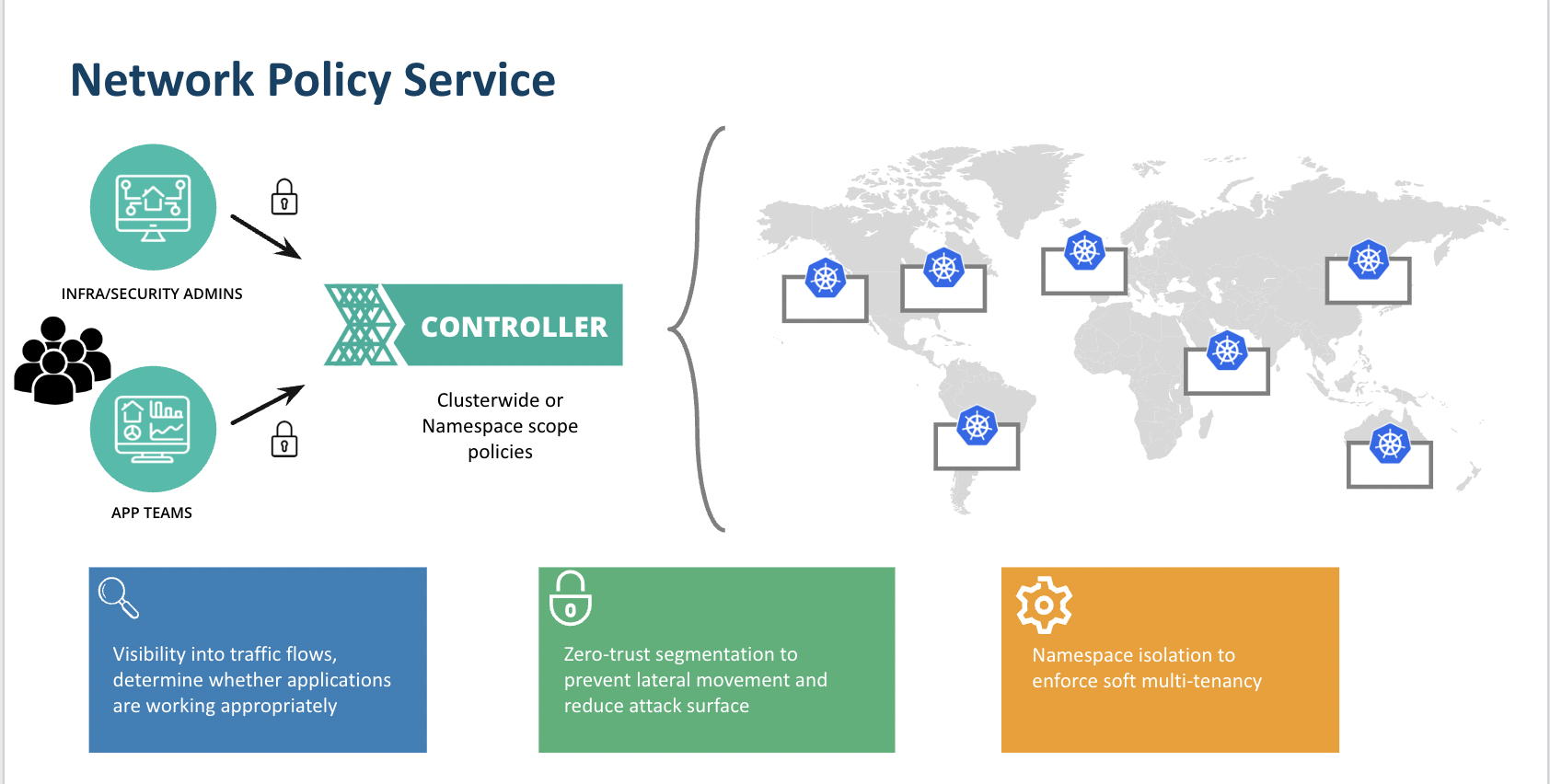

The Network Policy service is an out-of-the-box offering that allows platform teams and developers to build zero-trust network security and visibility into their Kubernetes environments without sacrificing governance, access control and performance. Some of the key advantages of this solution include:

- Standardized installation and deployment of network policies across a fleet of clusters via blueprinting, including the ability to define defaults that enable a Day 0 zero-trust model

- Access control for network flows based on assigned roles and assets. This lets platform teams give developers visibility into the traffic for their respective applications/namespaces while still maintaining RBAC controls

- Real-time and historical visibility into network flows for service monitoring and application debugging

- Assignment of policies at multiple levels of infrastructure, including clusters and namespaces, with the ability to fine-tune them based on application needs

Visit us here for a quick Network Policy Manager Demo

The offering broadly includes two key features:

- Network Policy management via Cilium including cluster-wide and namespace-scoped policies

- Network Visibility (Layer 4) that is access-controlled based on role

Network Policy Overview

Using the console, API, RCTL, or GitOps, an admin can create network policies of the following types:

- Cluster: these policies are scoped at the cluster level and should be used to enforce default sets of rules across the cluster

- Namespace: these policies are scoped at the namespace level and should be used to protect individual pods or applications in a given workspace

Cluster-wide and namespace policies are created through different workflows, and each requires specific roles; see the RBAC section below to learn more. Once created, these policies can be assigned to the appropriate assets and standardized across your project infrastructure.
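
Because the offering manages policies via Cilium, it can help to see what the two scopes look like as Cilium resources. The minimal sketch below assumes that the cluster and namespace policy types correspond to Cilium's `CiliumClusterwideNetworkPolicy` and `CiliumNetworkPolicy` custom resources (`cilium.io/v2`); the formats used by the console, API, RCTL, and GitOps workflows are not shown here and may differ. The policy names, the `demo` namespace, the `app: frontend`/`app: backend` labels, and port 8080 are hypothetical. The script prints each manifest as JSON, which `kubectl apply -f` also accepts.

```python
import json


def namespace_policy(namespace: str) -> dict:
    """Namespace-scoped rule (hypothetical app): only pods labeled
    app=frontend may reach pods labeled app=backend, on TCP 8080."""
    return {
        "apiVersion": "cilium.io/v2",
        "kind": "CiliumNetworkPolicy",
        "metadata": {"name": "allow-frontend-to-backend", "namespace": namespace},
        "spec": {
            # The pods this policy protects.
            "endpointSelector": {"matchLabels": {"app": "backend"}},
            "ingress": [
                {
                    "fromEndpoints": [{"matchLabels": {"app": "frontend"}}],
                    "toPorts": [{"ports": [{"port": "8080", "protocol": "TCP"}]}],
                }
            ],
        },
    }


def cluster_default_policy() -> dict:
    """Cluster-wide default (hypothetical): pods outside kube-system may
    send DNS queries to kube-dns."""
    return {
        "apiVersion": "cilium.io/v2",
        "kind": "CiliumClusterwideNetworkPolicy",
        # Cluster-scoped resource: no namespace in metadata.
        "metadata": {"name": "allow-dns-egress"},
        "spec": {
            "endpointSelector": {
                "matchExpressions": [
                    {
                        "key": "k8s:io.kubernetes.pod.namespace",
                        "operator": "NotIn",
                        "values": ["kube-system"],
                    }
                ]
            },
            "egress": [
                {
                    "toEndpoints": [
                        {
                            "matchLabels": {
                                "k8s:io.kubernetes.pod.namespace": "kube-system",
                                "k8s:k8s-app": "kube-dns",
                            }
                        }
                    ],
                    "toPorts": [{"ports": [{"port": "53", "protocol": "ANY"}]}],
                }
            ],
        },
    }


if __name__ == "__main__":
    for manifest in (namespace_policy("demo"), cluster_default_policy()):
        print(json.dumps(manifest, indent=2))
```

Note the difference in scope: the namespace policy carries `metadata.namespace` and only selects pods in that namespace, while the cluster-wide policy has no namespace and selects matching pods across the whole cluster. Because the cluster-wide policy adds an egress rule for the pods it selects, Cilium denies any other egress from those pods unless another policy allows it, which is how a default-deny baseline is typically built.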

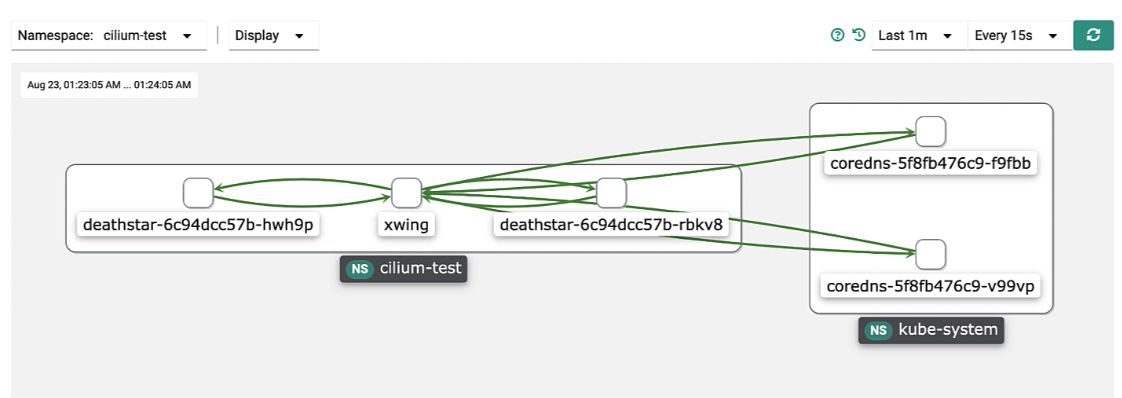

Network Visibility Overview

Users have real-time and historical visibility into network traffic flows. This can be used to:

- Validate network access to certain pods/namespaces based on application requirements

- Validate that network policy rules are in effect by visualizing network traffic flows

- Troubleshoot applications when network connectivity is down, using real-time and historical flows
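
The validation and troubleshooting uses listed here come down to filtering flows and comparing verdicts. The sketch below is purely illustrative: the `Flow` record, its fields, and the rule that a viewer sees a flow when its source or destination namespace is one they can access are hypothetical simplifications, not the dashboard's actual data model or access rule. The verdict labels `FORWARDED` and `DROPPED` are borrowed from Cilium's flow verdict names.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Flow:
    """Hypothetical, simplified Layer 4 flow record."""
    src_namespace: str
    dst_namespace: str
    dst_port: int
    protocol: str  # "TCP" or "UDP"
    verdict: str   # "FORWARDED" or "DROPPED"


def visible_flows(flows, allowed_namespaces):
    """Keep only flows that touch a namespace the viewer may access
    (assumed scoping rule: source OR destination namespace matches)."""
    return [
        f for f in flows
        if f.src_namespace in allowed_namespaces or f.dst_namespace in allowed_namespaces
    ]


def verdict_summary(flows):
    """Count flows per (destination namespace, port, protocol, verdict).
    Traffic that a policy should block shows up as DROPPED entries."""
    return Counter((f.dst_namespace, f.dst_port, f.protocol, f.verdict) for f in flows)


if __name__ == "__main__":
    sample = [
        Flow("frontend", "backend", 8080, "TCP", "FORWARDED"),
        Flow("frontend", "backend", 8080, "TCP", "FORWARDED"),
        Flow("scanner", "backend", 8080, "TCP", "DROPPED"),
        Flow("scanner", "payments", 443, "TCP", "DROPPED"),
    ]
    # A developer with access only to "backend" never sees the payments flow.
    for key, count in verdict_summary(visible_flows(sample, {"backend"})).items():
        print(key, count)
```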

For network policy enforcement to take effect, a Container Network Interface (CNI) is required. The Network Policy solution is built on top of Cilium, a popular CNI.

Cilium Overview and Integration

Cilium is a CNI based on eBPF technology. This provides several key advantages, including:

- Ability to scale across clusters with minimal performance overhead

- Ability to work with other CNIs via CNI chaining

Cilium Version

Network Policy Manager currently uses Cilium 1.12.

CNI Chaining

A key Cilium capability that the Network Policy solution relies on is CNI chaining. It allows Cilium to work alongside a CNI that is already in place, such as AWS-CNI or Azure CNI.

With CNI chaining, the primary CNI is responsible for base network connectivity, IP Address Management (IPAM), etc. The secondary CNI (Cilium in this case) enables network visibility and policy enforcement.
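
CNI chaining builds on the standard CNI configuration list (`.conflist`): the container runtime invokes the listed plugins in order, the first plugin sets up the pod interface and IPAM, and each later plugin receives the previous plugin's result. The sketch below appends a `cilium-cni` entry to a primary CNI's conflist to show that ordering. The primary plugin entries are a hypothetical, trimmed-down placeholder, and the function name is illustrative. On a real cluster the chained configuration is produced by the Cilium installation (Cilium's Helm chart exposes a `cni.chainingMode` value, for example `aws-cni`), so this is an illustration rather than a setup step.

```python
import copy
import json


def chain_cilium(conflist: dict) -> dict:
    """Return a copy of a CNI conflist with Cilium appended as the last
    (chained) plugin. The primary plugin stays first, so it still owns
    interface setup and IPAM; Cilium runs afterward to add visibility
    and policy enforcement."""
    chained = copy.deepcopy(conflist)
    plugins = chained["plugins"]
    if any(p.get("type") == "cilium-cni" for p in plugins):
        return chained  # already chained; keep the operation idempotent
    plugins.append({"name": "cilium", "type": "cilium-cni"})
    return chained


if __name__ == "__main__":
    # Hypothetical primary CNI config (fields trimmed for illustration).
    primary = {
        "cniVersion": "0.4.0",
        "name": "aws-cni",
        "plugins": [
            {"name": "aws-cni", "type": "aws-cni"},
            {"type": "portmap", "capabilities": {"portMappings": True}, "snat": True},
        ],
    }
    print(json.dumps(chain_cilium(primary), indent=2))
```

Appending rather than replacing is the point of chaining: removing the primary plugin would leave no component responsible for base connectivity and IPAM.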

RBAC

The following table lists the roles that can access specific components of the Network Policy Management Service.

| Feature | Roles |
|---|---|
| Cluster Network Policies | Infra Admin, Org Admin |
| Namespace Network Policies | Workspace Admin, Project Admin, Org Admin |

For more information on what these roles do generally, see the roles documentation.
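
The table reduces to a simple lookup. The sketch below encodes it in Python, for example as a pre-flight check in your own automation. The enum, mapping, and function names are hypothetical and not part of the product's API; the platform enforces these permissions itself.

```python
from enum import Enum


class PolicyScope(Enum):
    CLUSTER = "cluster"
    NAMESPACE = "namespace"


# Roles allowed to manage each policy type, per the RBAC table in this section.
ROLES_BY_SCOPE = {
    PolicyScope.CLUSTER: frozenset({"Infra Admin", "Org Admin"}),
    PolicyScope.NAMESPACE: frozenset({"Workspace Admin", "Project Admin", "Org Admin"}),
}


def can_manage(user_roles, scope: PolicyScope) -> bool:
    """Return True if any of the user's roles may manage policies of `scope`."""
    return not ROLES_BY_SCOPE[scope].isdisjoint(user_roles)


if __name__ == "__main__":
    print(can_manage({"Project Admin"}, PolicyScope.NAMESPACE))  # True
    print(can_manage({"Project Admin"}, PolicyScope.CLUSTER))    # False
```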

Pre-requisites & Considerations

Support is based on a combination of cluster type and primary CNI.

- Because Cilium is installed in chaining mode, Network Policy Management requires a supported primary CNI to be running in the cluster. The primary CNI cannot be Cilium, since Cilium is installed as a secondary CNI to perform network policy enforcement.

- The following cluster type/CNI combinations are supported, for both created and imported clusters:

    | Cluster Type | Primary CNI | Supported |
    |---|---|---|
    | Amazon EKS | AWS-CNI | YES |
    | Amazon EKS | Calico | YES |
    | Azure AKS | Azure-CNI | YES |
    | Azure AKS | Kubenet + Calico | YES |
    | “Upstream Kubernetes” on Bare Metal and VM Environments | Calico | YES |
    | MicroK8s | Calico | YES |

- The Monitoring & Visibility Add-On (Prometheus) is required for viewing traffic flows in the network visibility dashboard.

- Any pods/workloads that existed before Cilium/Network Policy Manager was deployed onto the cluster must be RESTARTED for policies to take effect. New pods/workloads do NOT need to be restarted.
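
The support rules in this section can be expressed as a small pre-flight check. The sketch below encodes the cluster type/CNI table and the rule that Cilium cannot be the primary CNI, and lists the pods created before Cilium was deployed, which are the ones that need a restart. All names are hypothetical; the cluster type strings are shorthand for the table rows (for example, `Upstream Kubernetes` stands for upstream Kubernetes on bare metal and VM environments), and `created_at` is assumed to come from each pod's `metadata.creationTimestamp`.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

# (cluster type, primary CNI) pairs from the support table; strings are shorthand.
SUPPORTED_COMBINATIONS = frozenset({
    ("Amazon EKS", "AWS-CNI"),
    ("Amazon EKS", "Calico"),
    ("Azure AKS", "Azure-CNI"),
    ("Azure AKS", "Kubenet + Calico"),
    ("Upstream Kubernetes", "Calico"),  # bare metal and VM environments
    ("MicroK8s", "Calico"),
})


def is_supported(cluster_type: str, primary_cni: str) -> bool:
    """Check a cluster against the support table.

    Cilium is rejected explicitly as the primary CNI because Network Policy
    Manager installs Cilium as a chained, secondary CNI."""
    if primary_cni.strip().lower() == "cilium":
        return False
    return (cluster_type, primary_cni) in SUPPORTED_COMBINATIONS


@dataclass(frozen=True)
class Pod:
    namespace: str
    name: str
    created_at: datetime  # assumed to come from metadata.creationTimestamp


def pods_needing_restart(pods, cilium_deployed_at: datetime):
    """Pods created before Cilium was deployed must be restarted for
    policies to take effect; pods created afterward need nothing."""
    return [p for p in pods if p.created_at < cilium_deployed_at]


if __name__ == "__main__":
    print(is_supported("Amazon EKS", "AWS-CNI"))  # True
    print(is_supported("Amazon EKS", "Cilium"))   # False
    deployed = datetime(2024, 1, 1, tzinfo=timezone.utc)
    pods = [
        Pod("shop", "cart-old", datetime(2023, 12, 31, tzinfo=timezone.utc)),
        Pod("shop", "cart-new", datetime(2024, 1, 2, tzinfo=timezone.utc)),
    ]
    for pod in pods_needing_restart(pods, deployed):
        print(f"{pod.namespace}/{pod.name}")  # shop/cart-old
```

Pods managed by a Deployment, DaemonSet, or StatefulSet can then be recreated with `kubectl rollout restart`, for example `kubectl rollout restart deployment/<name> -n <namespace>`; standalone pods have to be deleted and recreated.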