In-place Upgrades to Amazon EKS v1.27 Clusters using Rafay

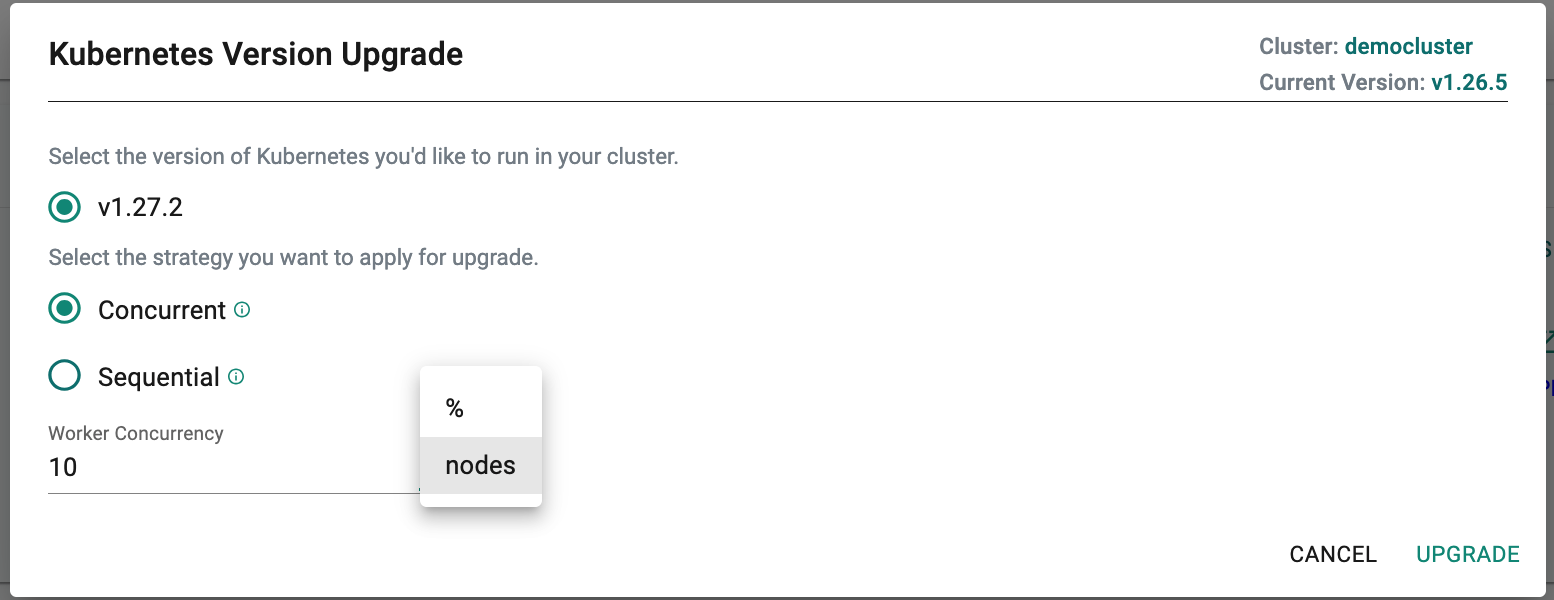

In our recent release, we added support for in-place upgrades of your EKS clusters to Kubernetes v1.27.

Our customers have told us that they want to provision new EKS clusters on the newest Kubernetes version so that they do not have to plan and schedule Kubernetes upgrades for those clusters right away. As a result, we generally introduce support for provisioning new clusters on a new Kubernetes version first, and then follow up with support for zero-touch in-place upgrades.

Note

Organizations that wish to perform sophisticated pre-upgrade checks, such as checks for API deprecations, are strongly encouraged to use Rafay's Fleet Operations for Amazon EKS.